Hello! I’m an Assistant Project Scientist in the UC Davis Phonetics Lab, REC Scholar with the UC Davis Alzheimer’s Disease Research Center (ADRC), and Specialist in the UCSF Fein Memory and Aging Center in the Vonk Lab. I received my Ph.D. in Linguistics in 2018 from UC Davis. [bio]

My research program tests the cognitive mechanisms that shape speech interactions. I am particularly interested in how people produce, perceive, and learn speech patterns with other people vs. voice technology. These interactions can reveal how people reason about others (social cognition) as well as the flexibility of our language systems.

I am also interested in clinical translation: testing whether features of spoken language can serve as markers of cognitive impairment, and examining how voice technology can better support older adults in maintaining independence.

Broadly, my research focuses on psycholinguistics, phonetics, and human-computer interaction, using experimental methods to probe speech behavior. For an overview of papers, see Research. You can also check out some of my public science communication below: Public Outreach and Press!

Contact

mdcohn at ucdavis dot edu

michelle dot cohn at ucsf dot edu

she/her/hers

News

- 4/24: Paper accepted to IEEE Engineering in Medicine and Biology Society (EMBC)

- 3/23: Speech markers of cognitive impairment and APOE-ε4 status accepted as AAIC Poster

- 3/12: 5 mentee posters accepted to the UC Davis Undergraduate Research Conference

- 2/26: Paper accepted to CHI 2026: Challenges in Automatic Speech Recognition for Adults with Cognitive Impairment

- 1/26: Awarded UC Davis/CITRIS Health: Digital Health/AI Seed Grant ($50k)

- 1/9: Awarded Translated Imminent Grant ($20k)

- 1/5: Specialist Position with the Vonk Lab at the UCSF Memory and Aging Center

- 11/18: Invited colloquium at UW Milwaukee

- 11/15: Two mentee posters at CAMP8!

- 11/13: Awarded a Google Research Gift

- 7/30: Presented poster @ Alzheimer’s Association International Conference (AAIC) on potential speech biomarkers

- 5/30: Yvonne Becker Colloquium Speaker @ Simon Fraser University

- 5/5: Poster @ National Alzheimer’s Coordinating Center (NACC) Spring Meeting

- 4/25: Two mentee posters @ UC Davis Undergraduate Research Conference

- 4/24: 2025 Take Our Children To Work Day

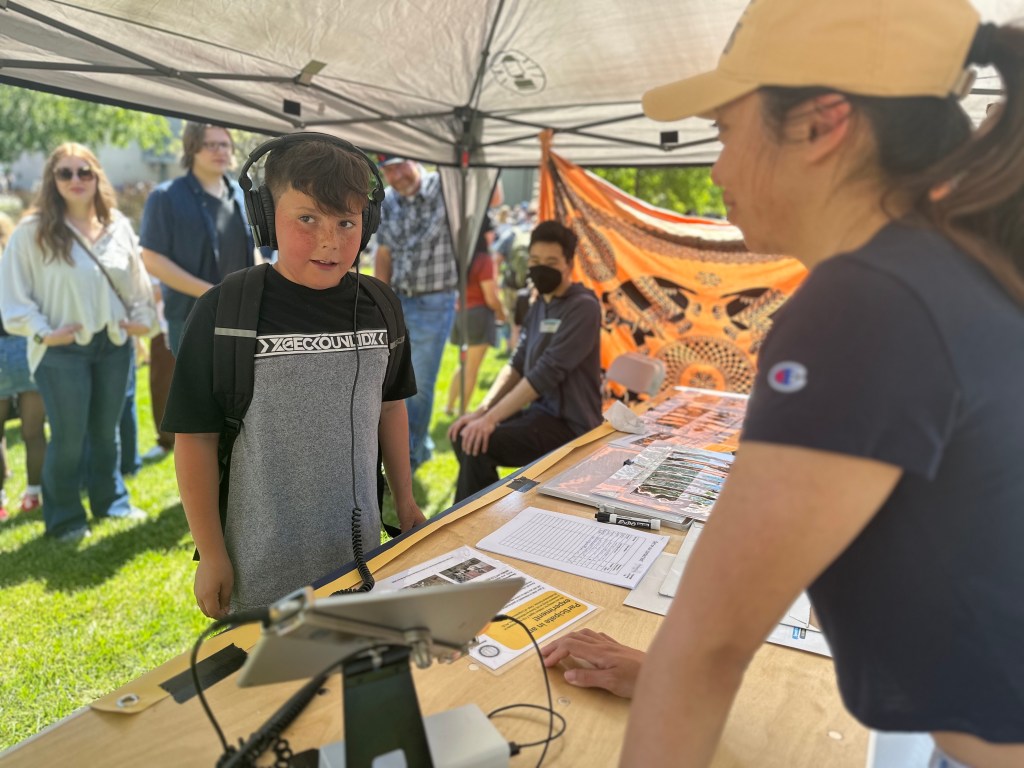

- 4/12: UC Davis Picnic Day 2025: Speech Science Booth!

For all updates, see Recent posts

1. How do people talk to technology?

Our experiments have found that speakers adapt the acoustic properties of their speech (speech rate, pitch, intensity) when they are talking to a voice assistant, compared to another person. But many of the ways speakers adapt to local communicative contexts (e.g., repairing a misunderstanding, marking focus, conveying emotion) appear to be parallel in human-human and human-computer interaction.

- Cohn, M., Barreda, S., Graf Estes, K., Yu, Z., & Zellou, G. (2024). Children and adults produce distinct technology- and human-directed speech. Scientific Reports. [OA article]

- Cohn, M., Mengesha, Z., Lahav, M., & Heldreth, C. (2024). African American English speakers’ pitch variation and rate adjustments for imagined technological and human addressees. Journal of the Acoustical Society of America (JASA) Express Letters, 4(4). [OA Article]

- Cohn, M., Bandodkar, G., Sangani, R., Predeck, K., & Zellou, G. (2024). Do People Mirror Emotion Differently with a Human or TTS Voice? Comparing Listener Ratings and Word Embeddings. Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. Honolulu, United States. [pdf][video][poster]

- Vonessen, J., Aoki, N., Cohn, M., & Zellou, G. (2024). Comparing perception of L1 and L2 English by human listeners and machines: Effect of interlocutor adaptations. Journal of the Acoustical Society of America. [OA article]

- Cohn, M., Ferenc Segedin, B., & Zellou, G. (2022). The acoustic-phonetic properties of Siri- and human-DS: Differences by error type and rate. Journal of Phonetics. [OA Article]

- Beier, E., Cohn, M. (co-first authors), Trammel, T., Ferreira, F., & Zellou, G. (accepted). Marking Prosodic Prominence for Voice-AI and Human Addressees. Journal of Experimental Psychology: Learning, Memory, and Cognition. [PsyArXiv]

- Cohn, M., & Zellou, G. (2021). Prosodic differences in human- and Alexa-directed speech, but similar error correction strategies. Frontiers in Communication. [OA Article]

- Cohn, M., Liang, K., Sarian, M., Zellou, G., & Yu, Z. (2021). Speech rate adjustments in conversations with an Amazon Alexa socialbot. Frontiers in Communication [OA Article]

- Cohn, M., Predeck, K., Sarian, M., & Zellou, G. (2021). Prosodic alignment toward emotionally expressive speech: Comparing human and Alexa model talkers. Speech Communication. [OA Article]

- Perkins Booker, N., Cohn, M., & Zellou, G. (2024). Linguistic Patterning of Laughter in Human-Socialbot Interactions. Frontiers in Communication, 9, 1346738

- Zellou, G., Cohn, M., & Kline, T. (2021). The Influence of Conversational Role on Phonetic Alignment toward Voice-AI and Human Interlocutors. Language, Cognition and Neuroscience [Article][pdf]

- Cohn, M., & Zellou, G. (2019). Expressiveness influences human vocal alignment toward voice-AI. Interspeech [pdf]

2. How do people perceive text-to-speech (TTS) voices?

We’ve found that TTS voices carry important social information, including apparent age, gender, and emotion. People use this information to guide their interactions with technology, often applying the social ‘rules’ from human-human interaction. Yet direct comparisons with human voices, or apparent human voices, show that top-down expectations shape how TTS voices are perceived.

- Cohn, M., Pushkarna, M., Olanubi, G., Moran, J., Padgett, D., Mengesha, Z., & Heldreth, C. (2024). Believing Anthropomorphism: Examining the Role of Anthropomorphic Cues on Trust in Large Language Models. Proceedings of the 2024 CHI Conference on Human Factors in Computing Systems. Honolulu, United States. [pdf][video][poster]

- Cohn, M., & Zellou, G. (2020). Perception of concatenative vs. neural text-to-speech (TTS): Differences in intelligibility in noise and language attitudes. Interspeech [pdf] [Virtual talk]

- Aoki, N., Cohn, M., & Zellou, G. (2022). The clear speech intelligibility benefit for text-to-speech voices: Effects of speaking style and visual guise. Journal of the Acoustical Society of America (JASA) Express Letters. [OA Article]

- Cohn, M., Sarian, M., Predeck, K., & Zellou, G. (2020). Individual variation in language attitudes toward voice-AI: The role of listeners’ autistic-like traits. Interspeech [pdf] [Virtual talk]

- Zellou, G., Cohn, M., & Block, A. (2021). Partial compensation for coarticulatory vowel nasalization across concatenative and neural text-to-speech. Journal of the Acoustical Society of America [Article]

- Block, A., Cohn, M., & Zellou, G. (2021). Variation in Perceptual Sensitivity and Compensation for Coarticulation Across Adult and Child Naturally-produced and TTS Voices. Interspeech. [pdf]

- Cohn, M., Raveh, E., Predeck, K., Gessinger, I., Möbius, B., & Zellou, G. (2020). Differences in Gradient Emotion Perception: Human vs. Alexa Voices. Interspeech [pdf] [Virtual talk]

- Cohn, M., Chen, C., & Yu, Z. (2019). A Large-Scale User Study of an Alexa Prize Chatbot: Effect of TTS Dynamism on Perceived Quality of Social Dialog. SIGDial [pdf]

- Gessinger, I., Cohn, M., Möbius, B., & Zellou, G. (2022). Cross-Cultural Comparison of Gradient Emotion Perception: Human vs. Alexa TTS Voices. Interspeech [pdf]

- Zhu, Q., Chau, A., Cohn, M., Liang, K-H, Wang, H-C, Zellou, G., & Yu, Z. (2022). Effects of Emotional Expressiveness on Voice Chatbot Interactions. 4th Conference on Conversational User Interfaces (CUI). [pdf]

3. How do people learn language from technology?

In human-human interaction, there are subconscious mechanisms that shape how we learn language. We learn the mapping between our accent and another speaker’s to understand a new phonological system. We also adopt the features of a language in our own speech. We’ve found that the type of talker, as an apparent human or a voice assistant, shapes how people learn an accent and how they mirror another speaker’s pronunciation patterns.

- Zellou, G., Cohn, M., & Pycha, A. (2023). The effect of listener beliefs on perceptual learning. Language. [pdf]

- Ferenc Segedin, B., Cohn, M., & Zellou, G. (2019). Perceptual adaptation to device and human voices: learning and generalization of a phonetic shift across real and voice-AI talkers. Interspeech [pdf]

- Cohn, M., Keaton, K., Beskow, J., & Zellou, G. (2023). Vocal accommodation to technology: The role of physical form. Language Sciences 99, 101567. [OA Article]

- Cohn, M., Jonell, P., Kim, T., Beskow, J., & Zellou, G. (2020). Embodiment and gender interact in alignment to TTS voices. Cognitive Science Society [OA Article] [Virtual talk]

- Cohn, M., Ferenc Segedin, B., & Zellou, G. (2019). Imitating Siri: Socially-mediated vocal alignment to device and human voices. ICPhS [pdf]

- Dodd, N., Cohn, M., & Zellou, G. (2023). Comparing alignment toward American, British, and Indian English text-to-speech (TTS) voices: Influence of social attitudes and talker guise. Frontiers in Computer Science, 5. [Article]

- Zellou, G., Cohn, M., & Ferenc Segedin, B. (2021). Age- and gender-related differences in speech alignment toward humans and voice-AI. Frontiers in Communication [OA Article]

- Zellou, G., & Cohn, M. (2020). Top-down effects of apparent humanness on vocal alignment toward human and device interlocutors. Cognitive Science Society [pdf]

4. Clinical features and language technology

As voice-AI becomes part of daily life, spoken language offers a low-burden, noninvasive window into cognitive function, both in human-human and human-computer interaction. I investigate whether acoustic and linguistic features of speech can serve as reliable, scalable markers of clinical characteristics, such as cognitive impairment. This is the focus of my work at UCSF and as a REC Scholar at the UC Davis Alzheimer’s Disease Research Center (ADRC).

I also evaluate how automatic speech recognition (ASR) systems (which transcribe speech to text) perform for clinical populations, identifying where current technologies fail and how they can be made more accessible and clinically robust.

- Cohn, M., Lanzi, A., Ishihara, Y., Chuah, C., Zellou, G., & Weakley, A. (to appear). Challenges in Automatic Speech Recognition for Adults with Cognitive Impairment. Conference on Human Factors in Computing Systems (CHI) 2026. Barcelona, Spain. [arxiv]

- Cohn, M., & Weakley, A. (2025a). Speech biomarkers of cognitive impairment in technology-directed tasks. [Poster]. National Alzheimer’s Coordinating Center (NACC) Spring Meeting. San Francisco, CA, United States.

- Cohn, M., Lanzi, A., & Weakley, A. (2025b). Speech rate as a biomarker of cognitive impairment in technology-directed tasks. [Poster]. Alzheimer’s Association International Conference (AAIC). Toronto, Canada.

- Cohn, M., Sarian, M., Predeck, K., & Zellou, G. (2020). Individual variation in language attitudes toward voice-AI: The role of listeners’ autistic-like traits. Interspeech [pdf] [Virtual talk]

- Snyder, C., Cohn, M., & Zellou, G. (2019). Individual variation in cognitive processing style predicts differences in phonetic imitation of device and human voices. Interspeech [pdf]

Public Outreach

UC Davis Picnic Day: Speech Science Booth

- Picnic Day 2025

- Picnic Day 2024

- Picnic Day 2023

- Picnic Day 2021 (Virtual Booth)

- Picnic Day 2020 (Virtual Booth) + Speech Science with Gunrock (Virtual Booth)

Take Our Children to Work (TOC) Day

- 2023: We hosted families at the UC Davis Phonetics Lab for the 2023 Take Our Children to Work Day on April 27th. Children and adults participated in a real speech science experiment and learned more about the research we do at the PhonLab!

- 2022: Virtual Event

Press

- Across Acoustics [podcast]: “Why don’t speech recognition systems understand African American English?” (7/9/24)

- New Scientist [article] “ChatGPT got an upgrade to make it seem more human” (5/14/24)

- AIP Publishing [article] “Machine Listening: Making Speech Recognition Systems More Inclusive” (4/30/24)

- Unfold Podcast [podcast] “Hey Siri, Why Do I Speak Differently to You?” (9/19/23)

- StudyFinds [article] “AI baby talk? People change their voice while talking to Alexa and Siri” (5/9/23)

- EurekAlert! [article] “Hey Siri, can you hear me?” (5/9/23)

- KCBS San Francisco (106.9 FM and 740 AM) [article] [recording] Live Interview with Rebecca Corral “UC Davis conducts study on how wearing a mask affects our speech patterns” (2/3/21)

- CBS-13 Sacramento On-Air News Segment [article] [video] “UC Davis Study Finds Face Masks Do Not Impact Ability To Communicate” (2/2/21)

- UC Davis Press Release [article] “Speaking and Listening Seem More Difficult in a Masked World, But People Are Adapting” (2/2/21)

- In Focus [article]. “Speech Unmasked: UWM linguist studies how masks impact intelligibility” (3/2021)

- CBS 58 Milwaukee On-Air News Segment [article/video]. “Researchers look at how masks impact communication” (3/18/21)

- Equinox [article] “Unmask Masked Speech” (3/6/21)

- US News & World Report [article] “As Mask-Wearing Prevails, People Are Adapting to Understanding Speech” (2/8/21)

- Ladders [article] “This is how you can make masked conversations 100% more successful” (2/8/21)

- WFMY Greensboro On-Air News Segment [article] [video] “What did you say? How masks affect your communication & understanding” (2/4/21)